Models on a variety of different datasets and robustness challenges. The main difference from your approach is, that the expected value is taken over the whole X × Y X × Y domain (taking the probability pdata(x, y) p d a t a ( x, y) instead of pdata(yx) p d a t a ( y x) ), therefore the conditional cross-entropy is not a random variable, but a number. Models with deterministic models and Variational Information Bottleneck (VIB)

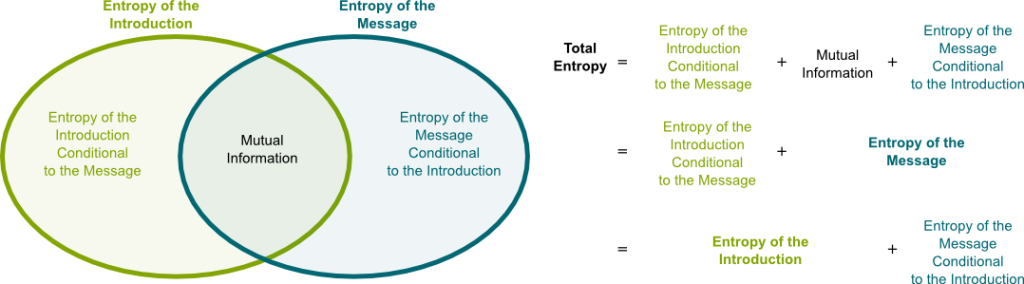

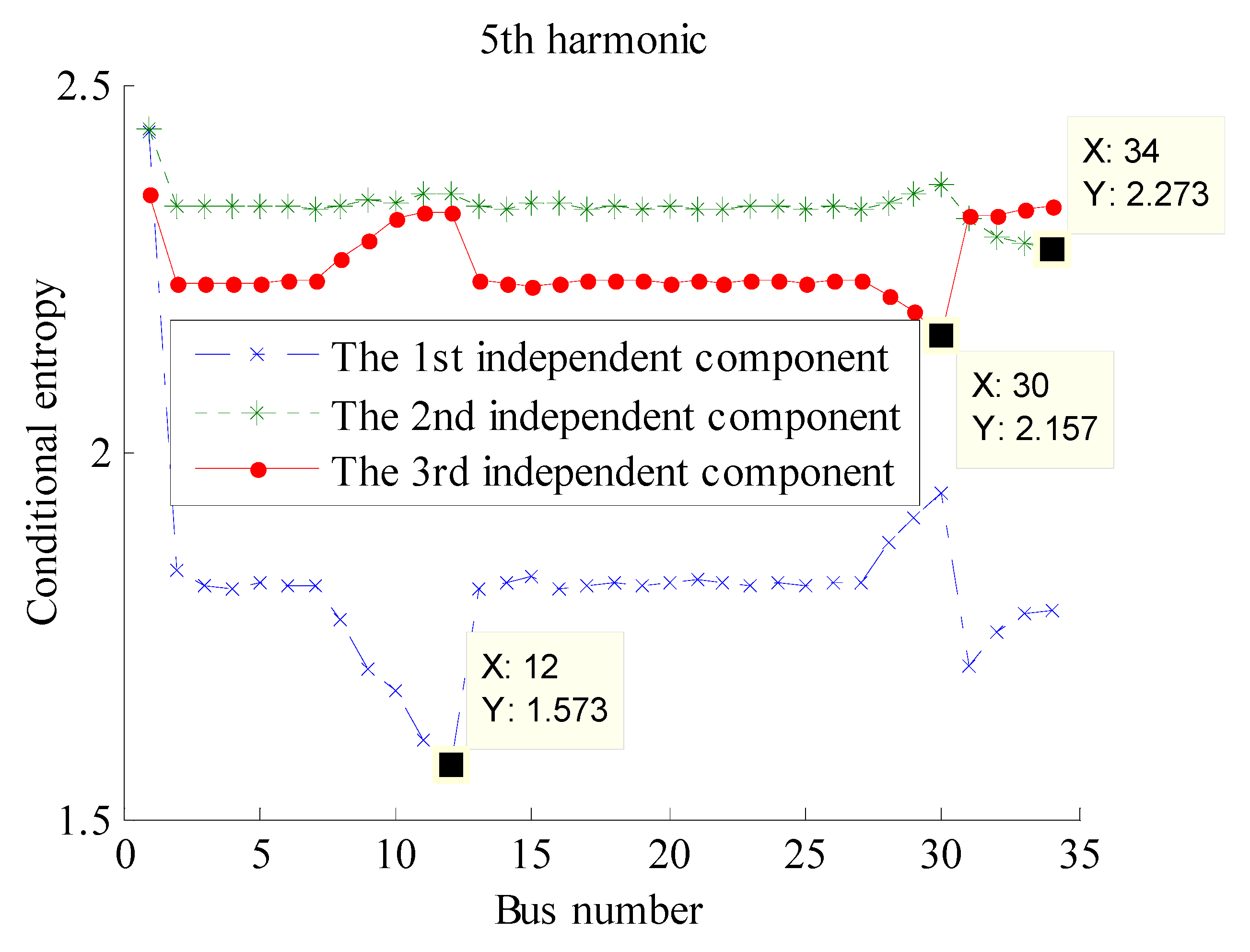

We experimentally test our hypothesis by comparing the performance of CEB In order to train models that perform well with respect to the MNIĬriterion, we present a new objective function, the Conditional Entropyīottleneck (CEB), which is closely related to the Information Bottleneck (IB). The Minimum Necessary Information (MNI) criterion for evaluating the quality ofĪ model. Much information about the training data. And in lemma 2.1.2: Hb(X) (logb a)Ha(X) Proof: logbp logba\ logap. We hypothesize that theseįailures to robustly generalize are due to the learning systems retaining too If the base of the logarithm is b, we denote the entropy as Hb(X).If the base of the logarithm is e, the entropy is measured in nats.Unless otherwise specified, we will take all logarithms to base 2, and hence all the entropies will be measured in bits. If Y is not supplied the function returns the entropy of X - see entropy. Entropy is measured between 0 and 1.(Depending on the number of classes in your dataset, entropy can be greater than 1 but it means the same thing, a very high level of disorder. condentropy takes two random vectors, X and Y, as input and returns the conditional entropy, H(XY), in nats (base e), according to the entropy estimator method. Robust generalization, which extends the traditional measure of generalizationĪs accuracy or related metrics on a held-out set. Example of Conditional Entropy Conditional Probability, Information Theory, Thermodynamics, One Coin, Entropy. Equation (2.3) is the chain rule for entropy for two random variables, but it can easily. With this property, the corresponding conditional entropy of a state can be written as a maximization of a noncommutative Hermitian polynomial in some operators V 1,, V m evaluated on the. This is considered a high entropy, a high level of disorder ( meaning low level of purity). Out-of-distribution (OoD) detection, miscalibration, and willingness to Modes, including vulnerability to adversarial examples, poor

Download a PDF of the paper titled The Conditional Entropy Bottleneck, by Ian Fischer Download PDF Abstract: Much of the field of Machine Learning exhibits a prominent set of failure Conditional entropy H (mif) defines the expected amount of information the set m carries with respect to feature f and movement mi.
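The definitions quoted above (entropy in bits or nats, the change-of-base lemma, the chain rule, conditional entropy, and conditional cross-entropy as an expectation over the joint distribution) can be checked numerically. The following is a minimal sketch in plain Python, not taken from any of the quoted sources: the joint table and the function names `entropy`, `conditional_entropy`, and `conditional_cross_entropy` are made up for illustration.

```python
import math

def entropy(p, base=2.0):
    """Shannon entropy of a probability vector p, in units set by `base`."""
    return -sum(pi * math.log(pi, base) for pi in p if pi > 0)

def conditional_entropy(joint, base=2.0):
    """H(X|Y) for a joint table with joint[x][y] = p(x, y)."""
    n_y = len(joint[0])
    p_y = [sum(row[y] for row in joint) for y in range(n_y)]
    h = 0.0
    for row in joint:
        for y, pxy in enumerate(row):
            if pxy > 0:
                h -= pxy * math.log(pxy / p_y[y], base)
    return h

def conditional_cross_entropy(joint, q_y_given_x, base=2.0):
    """Expectation of -log q(y|x) over the joint p(x, y): a single number."""
    total = 0.0
    for x, row in enumerate(joint):
        for y, pxy in enumerate(row):
            if pxy > 0:
                total -= pxy * math.log(q_y_given_x[x][y], base)
    return total

# Hypothetical joint distribution: rows index X, columns index Y.
joint = [[0.25, 0.25],
         [0.50, 0.00]]

p_x = [sum(row) for row in joint]
p_y = [sum(row[y] for row in joint) for y in range(2)]

h_xy = entropy([p for row in joint for p in row])  # joint entropy H(X, Y)
h_y = entropy(p_y)
h_x_given_y = conditional_entropy(joint)

# Chain rule with the roles of X and Y swapped: H(X, Y) = H(Y) + H(X|Y).
print(h_xy, h_y + h_x_given_y)

# Change of base (b = e, a = 2): H_e(X) = (ln 2) * H_2(X).
print(entropy(p_x, math.e), math.log(2) * entropy(p_x, 2))

# With more than two classes, entropy in bits can exceed 1.
print(entropy([0.25, 0.25, 0.25, 0.25]))

# Using the true conditional p(y|x) as the model q gives H(Y|X).
true_cond = [[pxy / p_x[x] for pxy in row] for x, row in enumerate(joint)]
print(conditional_cross_entropy(joint, true_cond))
```

Because the cross-entropy sum runs over every (x, y) pair weighted by the joint probability, the result is one scalar rather than a value that depends on x.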
